Once a study design is chosen, many researchers feel a sense of relief. The hard thinking, they assume, is mostly done. What follows seems technical: defining variables, collecting data, running analyses.

In reality, this is where a quieter but more consequential decision takes over: measurement.

What you choose to measure does not just determine what you will find.

It determines what you will never see—no matter how large your sample or how sophisticated your statistics are.

Measurement is not neutral

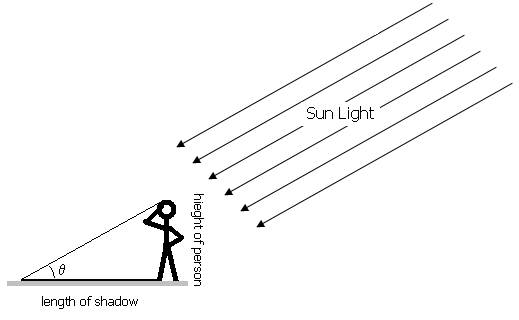

Measurements are often treated as neutral tools. Blood pressure, lab values, imaging distances, survey scores—these appear objective, almost mechanical. But every measurement is a decision about what counts as reality in your study.

When you select an outcome, you are deciding:

- which aspect of the phenomenon matters

- which variation becomes visible

- which experiences are reduced to numbers

- which dimensions disappear entirely

These decisions are rarely framed this way, yet they shape the meaning of the study more than most analytic choices.

Two studies can examine the same condition and reach very different conclusions simply because they chose to measure different things.

When two studies measure “success” differently

Recently, I reviewed literature on outcomes after hypospadias repair to prepare for a paper. Two studies examined the same technique, similar age groups, and comparable sample sizes.

| Study A defined success as | Study B defined success as |
| --- | --- |
| Urethral fistula (yes/no) | Cosmetic appearance scores |
| Meatal stenosis requiring dilation (yes/no) | Urinary stream quality (parent-reported) |
| Reoperation within six months | Child discomfort during urination |
| | Parent satisfaction |

Both studies were methodologically sound. Both claimed to study “surgical outcomes.” Yet their conclusions diverged. Study A concluded the technique was highly successful. Study B raised concerns about function and appearance.

Neither study was wrong. They were measuring different aspects of the same phenomenon. The choice of measurement determined what counted as success.
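To make this concrete, here is a minimal Python sketch of how the same cohort can produce two different "success rates" depending on the definition applied. Everything in it is an assumption made for illustration: the `Patient` fields, the five made-up records, the 1 to 5 cosmetic scale, and the cutoff of 4 do not come from either study, and the patient-reported definition is deliberately simplified to two of Study B's four measures.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    # Hypothetical fields; values below are invented, not real study data.
    fistula: bool
    stenosis_dilation: bool
    reoperation_6mo: bool
    cosmetic_score: int      # assumed 1-5 scale, higher is better
    parent_satisfied: bool


def success_complications(p: Patient) -> bool:
    """Study A-style definition: success means no recorded complication."""
    return not (p.fistula or p.stenosis_dilation or p.reoperation_6mo)


def success_patient_reported(p: Patient, min_cosmetic: int = 4) -> bool:
    """Study B-style definition (simplified): acceptable appearance
    and a satisfied parent. The cutoff of 4 is an arbitrary assumption."""
    return p.cosmetic_score >= min_cosmetic and p.parent_satisfied


def success_rate(patients, definition) -> float:
    """Share of patients counted as a success under the given definition."""
    return sum(definition(p) for p in patients) / len(patients)


# Five invented patients, chosen only to show the two definitions diverging.
cohort = [
    Patient(False, False, False, 5, True),
    Patient(False, False, False, 3, False),
    Patient(False, False, False, 2, True),
    Patient(True, False, True, 4, True),
    Patient(False, False, False, 4, True),
]

rate_a = success_rate(cohort, success_complications)
rate_b = success_rate(cohort, success_patient_reported)

print(f"Complication-based success rate: {rate_a:.0%}")
print(f"Patient-reported success rate:   {rate_b:.0%}")
```

The two rates differ even though the patients are identical, and some individual patients count as a success under one definition and a failure under the other. Nothing about the analysis changed; only the decision about what to measure did.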

Proxies and the quiet loss of meaning

In real-world research, we often measure what is available, not what we truly care about.

Quality of life becomes a questionnaire score.

Disease severity becomes a lab value.

Clinical improvement becomes length of stay.

These proxies are not incorrect—but they are approximations. And every approximation loses something.

The problem arises when we forget what has been lost. At that point, the proxy quietly replaces the concept itself.

A lesson from my thesis: measuring a slice as if it were the whole

My thesis examined imaging methods for classifying anorectal malformations (ARM) to guide surgical planning.

The most convenient measurement was the puborectalis–rectal (PR) distance on ultrasound. It was standardized, quantifiable, and easy to extract retrospectively.

PR distance seemed ideal.

But it captured only one dimension of a three-dimensional malformation.

A child could have a “normal” PR distance and still have unfavorable fistula anatomy. Another could fall near the cutoff yet have well-developed muscles and a relatively straightforward operation.

I wasn’t measuring anorectal malformation.

I was measuring one slice of it—and quietly treating that slice as the whole.

When my supervisor asked whether ultrasound alone was sufficient for surgical planning, I realized I couldn’t answer. I had conflated one measurement with complete anatomical understanding.

The solution wasn’t abandoning PR distance. It was recognizing its limits and supplementing it with other findings: fistula anatomy, muscle development, sacral ratio.

Together, these measurements told a meaningful story. Separately, each told only part of it.

When outcomes are chosen too late

A common workflow error is treating outcomes as a downstream detail.

The design is chosen first. Data availability is confirmed. Only then do we ask what to measure.

By that point, outcomes are often constrained by convenience rather than relevance.

I initially framed my question around what was easiest to extract:

“Does PR distance predict surgical type?”

What I actually cared about was different:

“Which imaging findings help surgeons plan the operation?”

Once I reversed the order—starting with the real question and then asking what needed to be measured—the study became harder to execute, but far easier to interpret.

Measurement creates blind spots

Every outcome highlights one aspect of reality while obscuring others.

Short-term outcomes hide long-term effects.

Anatomical measures erase lived experience.

Easily quantified variables sideline complexity.

These blind spots are not mistakes. They are the cost of choosing one way of seeing over another.

The danger lies in forgetting that these blind spots exist.

One exercise I now do early is to write a simple list:

- What this study can answer

- What this study cannot answer

Doing this before data collection prevents overinterpretation later and makes the discussion section more honest, not weaker.

Thinking about interpretation before measurement

Before finalizing an outcome, I now ask:

- If this shows no difference, what would that actually mean?

- If it shows a difference, what conclusions would still be unjustified?

- What important questions would remain unanswered either way?

I sometimes use AI to stress-test this thinking—not to design measurements, but to surface assumptions I might overlook when working alone.

That short exercise often reveals where I’m treating a proxy as if it were the phenomenon itself.

Measurement as a commitment

Just like study design, measurement is a commitment. Once chosen, you are committing to one way of understanding the phenomenon—and to ignoring other dimensions, intentionally or not.

Many reviewer critiques are not really about statistics. They are about meaning:

- Is this outcome clinically relevant?

- Does it capture what actually matters?

- Are you measuring the right thing, or just the convenient thing?

These questions cannot be fixed during analysis. They are decided much earlier.

What comes next

Even with careful measurement, another problem remains.

Bias does not enter only during analysis. It enters through who gets included, what gets recorded, and what gets ignored—long before the first data point is collected.

That is where many otherwise careful studies quietly go wrong.

And that is where we turn next.