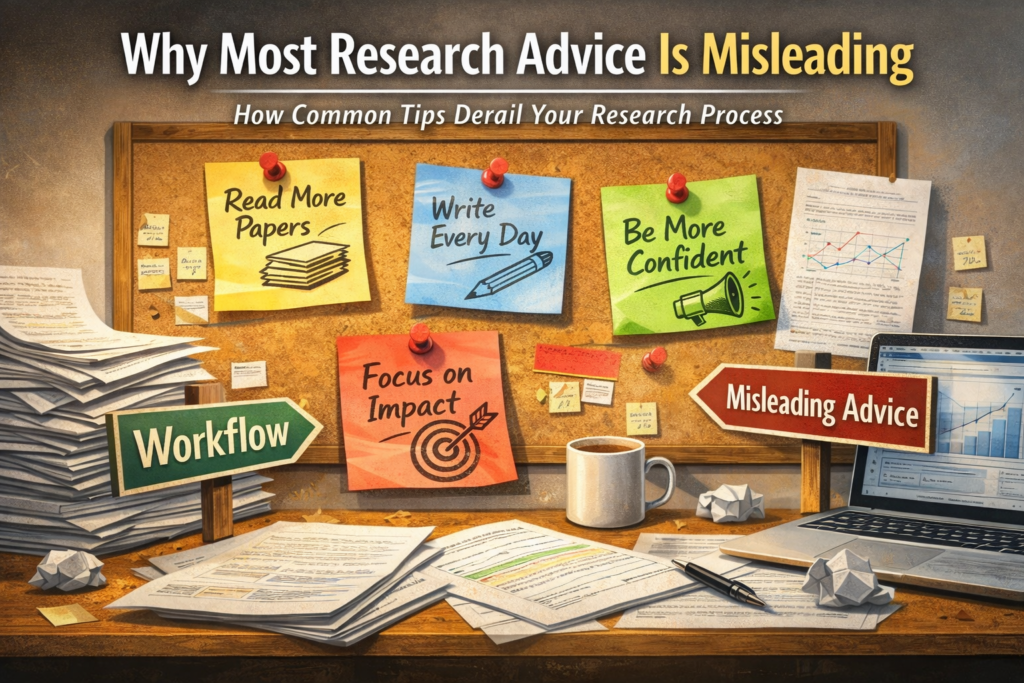

Early in my training, I absorbed endless research advice:

“Read more papers.”

“Write every day.”

“Focus on impact.”

“Be more confident.”

The advice sounded reasonable. I followed it carefully. My productivity collapsed.

After several failed attempts, I realized the problem wasn’t discipline or intelligence. Most research advice is structurally misleading—not because it’s wrong in principle, but because it ignores how research actually progresses.

The Problem with “Read More Papers”

This is the most common advice researchers receive. The logic seems obvious: more reading leads to more knowledge, which leads to better research.

In practice, reading without boundaries produces the opposite:

- endless reference chasing

- permanent “not ready yet” paralysis

- delayed writing because “I still need to read more”

I’ve seen this repeatedly—in myself and in colleagues.

Reading isn’t the problem. The problem is that “read more” offers no stopping rule. And without boundaries, research doesn’t move forward.

The Problem with “Write Every Day”

This advice comes straight from productivity culture. Consistency, we’re told, guarantees progress. But research writing doesn’t work that way. Research writing is not linear accumulation. It depends on moments when interpretation stabilizes.

Writing too early doesn’t accelerate progress. It creates false momentum—text that feels productive but later has to be dismantled.

In most stalled projects, the bottleneck isn’t lack of writing. It’s writing before the logic is ready, then paying the cost during revision.

The Problem with “Focus on Impact”

This advice dominates grant workshops and mentorship sessions.

“Aim high.”

“Emphasize novelty.”

“Target top journals.”

In practice, this produces two predictable failures:

- Overreach during study design: complexity is added to chase novelty, beyond what the data can support.

- Overstatement during interpretation: claims exceed evidence, triggering reviewer resistance.

Both failures come from optimizing for “impact” instead of publishability under constraints. Impact is an outcome of execution, not a design principle.

The Problem with “Be More Confident”

This advice rests on a misdiagnosis. When a paper feels weak, people assume the author lacks confidence. In reality, the issue is usually lack of positioning.

A fragile discussion doesn’t mean the author doubts their work. It means they haven’t clearly defined:

- what the study shows—and what it doesn’t

- where it fits relative to existing literature

- how reviewers should evaluate the contribution

Telling someone to “sound more confident” only amplifies unsupported claims. Reviewers recognize this immediately.

Why This Advice Persists

These tips survive not because advisors are careless, but because they are:

- Easy to give: generic advice avoids engaging with the actual study.

- Psychologically comforting: they create a sense of action without requiring structural change.

- System-neutral: they avoid explaining how incentives shape what gets published.

But advice that ignores workflow often makes research harder, not easier.

What Works Instead

Instead of “read more papers”:

→ Read with a stopping rule.

Instead of “write every day”:

→ Write when the logic stabilizes.

Instead of “focus on impact”:

→ Focus on containment—what the data can truly support.

Instead of “be more confident”:

→ Position clearly so reviewers know how to judge the work.

These aren’t motivational slogans.

They’re constraints that allow research to function.

Advice vs. Workflow

Most research advice is motivational, not operational. It assumes the problem is insufficient effort. In reality, research failures usually come from misallocated effort—working hard at the wrong stage, on the wrong task.

Workflow thinking doesn’t reduce effort. It makes effort legible—so you can see what moves the project forward and what quietly stalls it.

What’s Next

This perspective becomes concrete in the Practice section:

- how I decide what belongs in a discussion—and what to exclude

- how I revise a rejected paper without spiraling

- when I stop improving and submit

These aren’t theories. They’re decisions made under real constraints—where generic advice fails, and workflow discipline starts to matter.
