Most research projects don’t fail because the analysis is wrong. They fail much earlier—at the moment the study design is chosen.

During my second year of residency, we were required to present our research proposals to the faculty. There were six of us in that session. By the end of it, half of the proposals had been rejected, not because the topics were trivial, but because the study designs could not actually support the questions being asked.

That experience stayed with me. It taught me that design failure rarely announces itself early. It only becomes obvious once the reasoning is made explicit—and by then, many projects are already too far along to change course.

The research question looks reasonable. The literature review is thorough. The methods section sounds sophisticated. Reviewers may even compliment the topic.

Then, months later, the project runs into a quiet but fatal realization: the study was never capable of answering the question it set out to ask. This isn’t a technical mistake. It’s a workflow mistake.

The illusion of a “good” research question

Early-stage researchers are often told to start with a good research question. (Sounds familiar, right?)

What they’re rarely told is that a good question means very little on its own. A question only becomes meaningful when it meets a design that can carry it.

Some patterns show up again and again:

- a question that asks about causality, paired with a purely descriptive design

- a question that depends on long-term outcomes, but only short-term data are available

- a question that assumes precise measurement where only rough proxies exist

- a question that sounds innovative, until ethical constraints make it impossible to execute

None of these questions are inherently bad. They simply can’t survive under the real conditions of the study. The mistake isn’t asking the wrong question. It’s failing to ask whether the question can survive contact with reality.

Seeing the limits early

When I was working on my thesis, I read several papers that looked very close to what I wanted to do. On the surface, it felt reassuring—others had done something similar, so mine should work too.

But placing the idea into the context of my own workplace changed everything.

Even before collecting any data, I could already see where the problems would appear: selection bias from how patients were referred, information bias from incomplete records, and the usual limitations that come with real-world clinical data. (At the time, it was my very first clinical trial.)

More importantly, I could anticipate what reviewers would likely point out—and which statistical adjustments I would need to at least acknowledge, even if I couldn’t fully fix them.

This wasn’t because I was particularly insightful. It was simply because I spent time thinking through what my design could and couldn’t handle before committing to it.

That step alone saved me from pursuing questions my study was never equipped to answer.

Design is constraint-solving, not optimization

Many researchers approach study design as an optimization problem: What is the best design for this question?

In practice, that framing is misleading. The more useful question is:

What design can reasonably answer this question under my actual constraints?

Every study sits at the intersection of five forces:

- the question itself

- available data

- time

- ethical limits

- resources—people, funding, access

Design is not about ignoring these constraints in pursuit of an ideal. It is about negotiating with them honestly. And believe me, you should convey this message in your thesis. Bad results are not bad in themselves; bad results with a good, honest interpretation are real science.

This is why two researchers can ask similar questions and choose different designs—both appropriately. A design isn’t wrong because it isn’t ideal. It’s wrong only when it pretends constraints don’t exist.
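To make the constraint-solving framing concrete, here is a minimal sketch in Python. It treats each candidate design as a set of requirements and keeps only the designs whose requirements are all met by what is actually available. The design names, the requirement labels, and the function `feasible_designs` are hypothetical placeholders for illustration, not a validated checklist; a real study would list its own.

```python
# Hypothetical requirements per design, for illustration only.
# A real project would write down its own, based on its setting.
CANDIDATES = {
    "randomized trial": {
        "can assign exposure",
        "prospective follow-up",
        "funding for recruitment",
    },
    "prospective cohort": {"prospective follow-up"},
    "retrospective cohort": {"existing records"},
    "cross-sectional": {"existing records"},
}


def feasible_designs(candidates, available):
    """Return the designs whose every requirement is in `available`."""
    return [name for name, needs in candidates.items() if needs <= available]


# Assumed situation: only existing records are available.
available = {"existing records"}
print(feasible_designs(CANDIDATES, available))
# ['retrospective cohort', 'cross-sectional']
```

The point of the sketch is the direction of the reasoning: start from what is available and see which designs survive, rather than starting from the ideal design and hoping the constraints cooperate.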

Aligning questions with designs (without worshipping methods)

At the workflow level, you don’t need an exhaustive list of methods.

You need a rough alignment between what you’re asking and what your design can support.

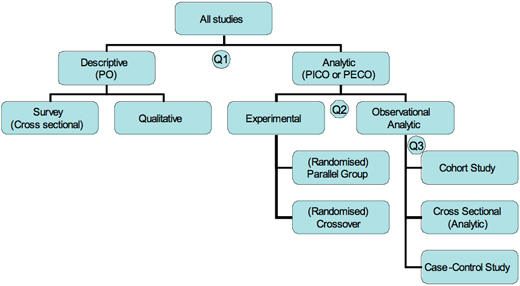

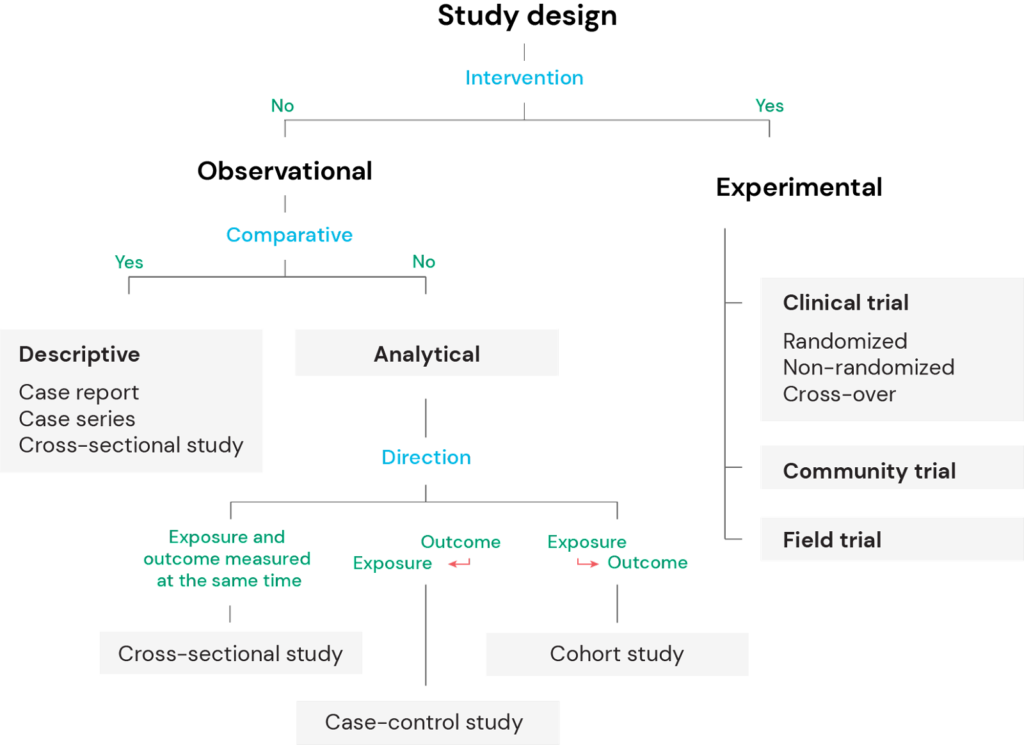

- Descriptive questions: What does this population look like? → case series, cross-sectional studies

- Association questions: Are X and Y related? → cohort, case–control, or cross-sectional designs

- Causal questions: Does X cause Y? → randomized trials or quasi-experimental designs

- Prediction questions: Can we predict Y from X? → modeling and validation studies

Problems arise when this alignment is ignored—not because of ignorance, but because of aspiration.

Researchers often choose designs based on journal prestige, perceived rigor, or what looks advanced. But a mismatched design doesn’t become valid through statistical sophistication.
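The rough alignment above can be written down as a simple lookup, which makes a mismatch easy to spot before any data are collected. This is only a sketch: the four question types and their designs come from the list above, while the names `QUESTION_DESIGNS` and `design_supports` are hypothetical, and a real decision involves far more judgment than a table lookup.

```python
# Question types and designs taken from the alignment list above.
# The dictionary and function names are illustrative assumptions.
QUESTION_DESIGNS = {
    "descriptive": {"case series", "cross-sectional"},
    "association": {"cohort", "case-control", "cross-sectional"},
    "causal": {"randomized trial", "quasi-experimental"},
    "prediction": {"modeling and validation"},
}


def design_supports(question_type: str, design: str) -> bool:
    """Return True if `design` is listed for `question_type` in the rough alignment."""
    if question_type not in QUESTION_DESIGNS:
        raise ValueError(f"unknown question type: {question_type!r}")
    return design in QUESTION_DESIGNS[question_type]


# A causal question paired with a cross-sectional design is flagged as a mismatch.
print(design_supports("causal", "cross-sectional"))  # False
print(design_supports("association", "cohort"))      # True
```

A `False` here does not mean the study is worthless. It means the question, as currently worded, is asking for more than the design can support, so one of the two has to change.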

Where projects quietly break

Certain failure points repeat themselves across disciplines:

- The design is chosen while the question is still unstable

- Outcomes are defined after the design, turning measurement into an afterthought

- Bias is addressed late, as a checklist item rather than a structural issue

- Interpretation is never anticipated—results are produced without asking what claims would actually be defensible

These failures aren’t random.

They reflect a workflow that treats design as a formality, rather than a decision that shapes everything that follows.

Design as commitment

Choosing a design isn’t just a technical step. It’s a commitment.

Once the design is fixed, you are implicitly agreeing to:

- what you can legitimately claim

- what you must remain silent about

- what kinds of reviewers you will face

- what criticisms you are likely to encounter

This is why experienced researchers tend to pause longer at this stage. They understand that design locks in both power and limitation. A good workflow makes this commitment explicit—early, deliberately, and with eyes open.

What comes next

Once the design is chosen, the central question changes:

What exactly am I measuring—and what am I blind to because of that choice?

This is where many studies that look solid on paper begin to weaken in practice.

And that is where we turn next.