Red-Teaming Your Study Design with Claude 3

The most expensive mistake in clinical research is discovering a fatal methodological flaw after data collection is already complete. At that point, no amount of statistical wizardry or post-hoc correction can save the study. You are stuck trying to write a Discussion section that apologizes for a fundamentally broken design, knowing full well the paper will face rejection after rejection.

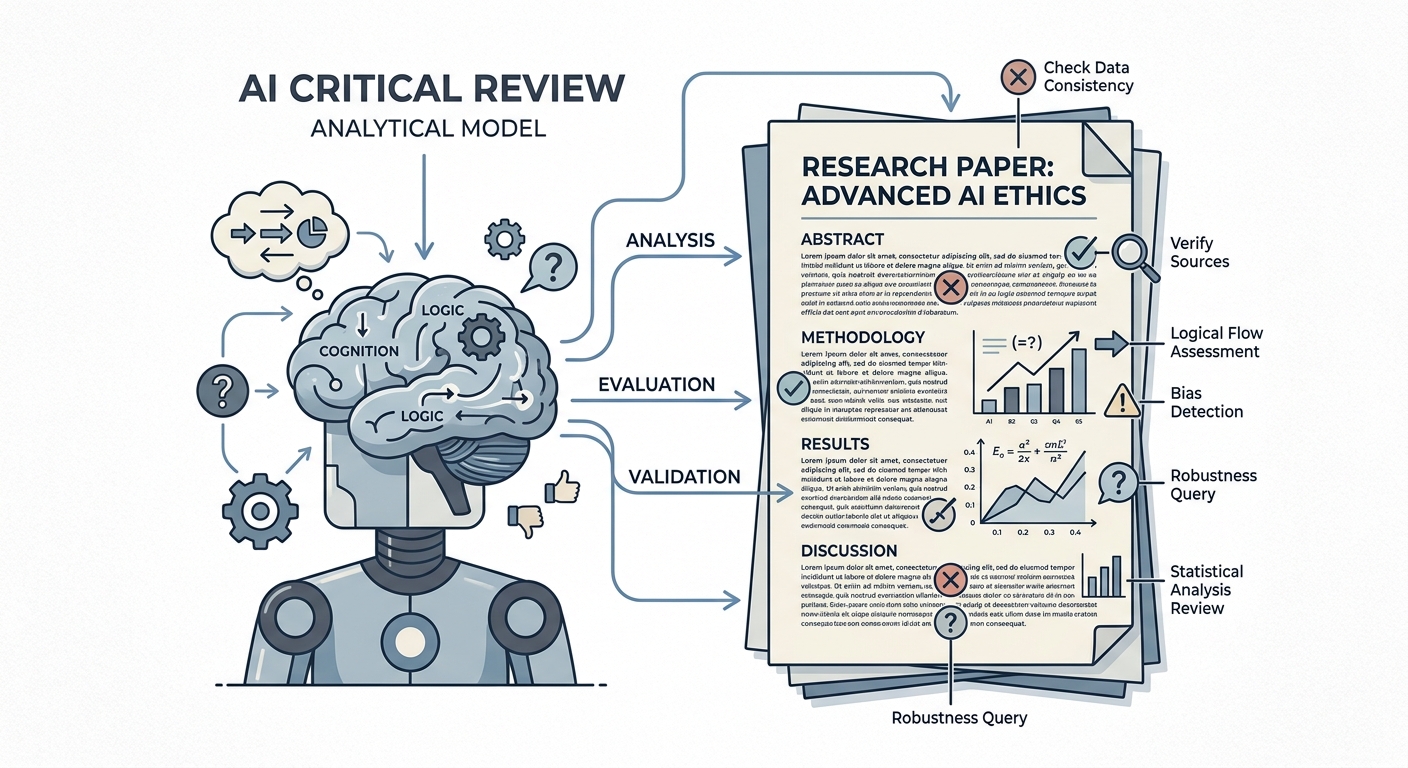

In software engineering and cybersecurity, teams use a practice called "red-teaming." A red team is an independent group of experts explicitly tasked with attacking a system to expose its vulnerabilities before it goes live to the public.

In clinical research, our red team is usually Reviewer 2. The problem is that Reviewer 2 arrives a year too late.

You need a red team before you even submit your protocol to the Institutional Review Board (IRB). Fortunately, you can use Large Language Models—specifically Claude 3—to red-team your study design.

The Danger of Optimism Bias

When you design a study, you inherently suffer from optimism bias. You are excited about the hypothesis. You assume that patients will enroll at the predicted rate, that follow-up will be nearly 100%, and that confounding variables will magically distribute themselves evenly between your groups.

Because you built the logic of the study, your brain is wired to defend it, not to break it. You need an adversarial perspective to shatter that optimism and reveal the structural weaknesses of your protocol.

LLMs, particularly Anthropic's Claude 3 (which is heavily optimized for logical reasoning, nuance, and structural analysis), excel at adversarial thinking if prompted correctly. Unlike earlier AI models that suffered from sycophancy—meaning they were trained to be polite and tell you your ideas were brilliant—Claude can be instructed to be aggressively critical.

Constructing the Red-Team Prompt

Once you have drafted your initial protocol (including your PICO framework, proposed methodology, inclusion/exclusion criteria, and primary endpoints), do not just ask Claude to "review" it. Asking for a review invites polite suggestions. Ask it to destroy it.

Here is the exact prompt framework I use to red-team my protocols:

"Act as a highly critical, adversarial statistical reviewer and methodologist for a top-tier medical journal (e.g., The Lancet, JAMA). I am going to provide my proposed study design and protocol. Your task is to red-team this protocol.

I do not want compliments. I want you to identify all potential methodological flaws, uncontrolled confounding variables, selection biases, and measurement errors. Point out exactly why this study design might fail in the real world or be rejected during peer review.

Structure your critique as follows:

- Fatal Flaws: Issues that would guarantee rejection.

- Confounding Variables: What variables have I failed to control for?

- Operational Risks: Where will this fail during actual data collection?

Give me your most ruthless critique."

Synthesizing the Critique: A Case Example

Imagine you submit a protocol for a retrospective cohort study comparing a new surgical technique to a traditional one. You feed it into Claude using the prompt above.

When Claude returns the critique, read it with your ego checked at the door. It will likely point out issues you have genuinely overlooked:

- Selection Bias (Confounding by Indication): "You are comparing two techniques retrospectively, but you have no mechanism to control for selection bias. Surgeons likely chose the new technique for healthier patients and the traditional technique for complex cases. Without propensity score matching, your outcomes will be irreparably skewed."

- Measurement Error: "Your primary endpoint relies on subjective patient-reported pain scores from chart reviews. Retrospective pain scores are notoriously unreliable and poorly documented. This endpoint is too fragile for a primary outcome."

It may also hallucinate or raise concerns that are clinically irrelevant in your specific context. Your job is not to blindly accept every critique, but to use them as a rigorous stress test. If Claude flags a bias, ask yourself: Could a human reviewer make this exact same argument? If the answer is yes, you must fix it now.

Fixing the Flaws When It Still Costs Nothing

The beauty of red-teaming with AI is that it occurs when the study exists only on paper.

Fixing a confounding variable in a Word document—by adding propensity score matching to your statistical plan or tightening your exclusion criteria—takes ten minutes. Fixing that same confounding variable after you have enrolled 100 patients and spent six months collecting data is impossible.

By inviting the harshest criticism early, you ensure that by the time your paper actually reaches Reviewer 2, you have already anticipated their objections and built the defenses directly into your methodology.

Tools mentioned in this article include Claude.